Your application is powerful, but that slight, frustrating lag in Python SDK 25.5a is holding it back from its full potential. Version 25.5a introduced some great new features, but it also brought new performance bottlenecks, especially for I/O-bound tasks.

This guide provides actionable, code-level optimizations to specifically target and eliminate lag through profiling, caching, and asynchronous processing. It’s based on extensive testing and real-world application of the SDK’s new architecture.

By the end, you’ll have a concrete framework for diagnosing and fixing the most common causes of latency in this specific SDK version. Let’s dive in.

Identifying the Hidden Lag Culprits in SDK 25.5a

Synchronous I/O operations, like network requests and database queries, are a common bottleneck. They block the main execution thread, causing the application to freeze. It’s like when you’re streaming a movie, and it buffers at the most exciting part.

Inefficient data serialization is another major issue. Handling large JSON or binary payloads can become a CPU-bound nightmare. Imagine trying to download a huge file on a slow internet connection; it just drags everything down.
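To check whether serialization is really a hot spot, you can time a round trip on a payload shaped like your own data. The following is a minimal sketch using only the standard library; the payload shape and the `time_json_round_trip` helper are made up for illustration and are not part of the SDK.

```python
import json
import time

def time_json_round_trip(payload, repeats=5):
    """Return the best-of-N time (in seconds) to encode and decode payload."""
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        encoded = json.dumps(payload)
        json.loads(encoded)
        best = min(best, time.perf_counter() - start)
    return best

# Synthetic payload standing in for a large SDK response (hypothetical shape).
payload = [{"id": i, "name": f"item-{i}", "tags": ["a", "b", "c"]}
           for i in range(50_000)]

elapsed = time_json_round_trip(payload)
print(f"JSON round trip for {len(payload)} records: {elapsed * 1000:.1f} ms")
```

If the number is small compared with your request latency, serialization is probably not where the lag comes from.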

Memory management overhead is a big deal too. Object creation and destruction in tight loops can trigger garbage collection pauses. This introduces unpredictable stutter, making your app feel like it’s running through molasses.
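One way to see whether garbage collection is contributing to stutter is to time each collection with the standard `gc.callbacks` hook. This is a rough sketch with a synthetic workload; in CPython, collections are triggered when container allocations outpace deallocations, so the loop below keeps its objects alive to provoke them. The `track_gc` name is just illustrative.

```python
import gc
import time

gc_pauses = []       # duration of each collection, in seconds
_gc_started = [0.0]  # start time of the collection currently in progress

def track_gc(phase, info):
    """gc callback: record how long each collection takes."""
    if phase == "start":
        _gc_started[0] = time.perf_counter()
    elif phase == "stop":
        gc_pauses.append(time.perf_counter() - _gc_started[0])

gc.callbacks.append(track_gc)
try:
    # Tight loop that keeps allocating container objects (synthetic workload).
    kept = []
    for i in range(200_000):
        kept.append({"index": i, "values": [i, i + 1]})
finally:
    # Always unregister the callback so it does not slow down later code.
    gc.callbacks.remove(track_gc)

print(f"collections: {len(gc_pauses)}, "
      f"total pause: {sum(gc_pauses) * 1000:.2f} ms, "
      f"longest: {max(gc_pauses, default=0.0) * 1000:.2f} ms")
```

Run it around your own hot loop instead of the synthetic one; if the longest pause is negligible, look elsewhere before restructuring object lifetimes.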

There’s also a version-specific issue with SDK 25.5a. The new logging features can cause significant performance degradation if left at a verbose level (e.g., DEBUG) in a production environment. It’s like leaving the lights on in every room of your house all night long—wasteful and inefficient.
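As a sketch of what turning logging down can look like with Python's standard `logging` module: the logger name `"sdk"` below is an assumption, since the name the SDK actually logs under is not given here, and `expensive_summary` is a hypothetical helper.

```python
import logging

# "sdk" is a hypothetical logger name; check which name your SDK really uses.
sdk_logger = logging.getLogger("sdk")
sdk_logger.setLevel(logging.WARNING)  # drop DEBUG and INFO records in production

def expensive_summary():
    """Hypothetical helper that is costly to compute."""
    return ", ".join(str(i) for i in range(10_000))

# Guard costly debug messages so the argument is never built when DEBUG is off.
if sdk_logger.isEnabledFor(logging.DEBUG):
    sdk_logger.debug("state: %s", expensive_summary())
```

Passing arguments with `%s` placeholders instead of pre-formatting the string also lets the logging module skip formatting for records that are filtered out.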

To help you pinpoint the problem, here’s a quick diagnostic checklist:

- Check for synchronous I/O operations. Are there any network requests or database queries that could be blocking the main thread?

- Review data serialization. Are you handling large JSON or binary payloads? Can you optimize this process?

- Examine memory usage. Are there frequent object creations and destructions in tight loops? Consider reducing the number of temporary objects.

- Adjust logging levels. Make sure the logging level is set appropriately for a production environment. Turn off verbose logging unless absolutely necessary.

Working through this checklist should help you identify and mitigate the hidden lag culprits in an application built on Python SDK 25.5a.

Strategic Caching: Your First Line of Defense Against Latency

Latency can be a real pain, especially when you’re dealing with expensive and repeatable function calls. One simple and effective way to tackle this is by using in-memory caching. Python’s functools.lru_cache decorator is a great tool for this.

Here’s a quick example:

    from functools import lru_cache

    @lru_cache(maxsize=128)
    def expensive_function(x):
        # Simulate an expensive or time-consuming operation
        return x * x

This code caches the results of expensive_function so that if it’s called again with the same argument, the cached result is returned instead of recalculating it.
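To confirm the cache is actually being hit, `lru_cache` exposes `cache_info()` on the decorated function. A small sketch, using a throwaway `square` function as a stand-in for a real expensive call:

```python
from functools import lru_cache

@lru_cache(maxsize=128)
def square(x):
    # Stand-in for an expensive computation
    return x * x

square(4)  # first call with 4: computed (miss)
square(4)  # same argument again: served from the cache (hit)
square(5)  # new argument: computed (miss)

info = square.cache_info()
print(info)  # CacheInfo(hits=1, misses=2, maxsize=128, currsize=2)

square.cache_clear()  # drop all cached entries when the data may be stale
```

A low hit count in production is a sign that the arguments vary too much for this kind of caching to help.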

But when should you use lru_cache versus something more robust like Redis? It depends on your application. For single-instance applications, lru_cache is usually sufficient.

If you have a distributed setup, though, you’ll need a solution like Redis to share the cache across multiple instances.

Let’s look at a specific SDK use case. With SDK 25.5a, you might need to cache authentication tokens or frequently accessed configuration data.

This eliminates redundant network round-trips, making your app faster and more efficient.

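However, there’s a catch. Cache invalidation can be tricky. functools.lru_cache evicts entries only by size, never by age, so time-sensitive data needs a cache that supports expiry (Redis has this built in). You then need to set appropriate TTL (Time To Live) values based on how often the data changes.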

For example, if your data is highly volatile, a shorter TTL makes sense. If it’s more stable, you can afford a longer TTL.
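For a single-process application, a TTL cache can be sketched in a few lines. Everything here is illustrative: `TTLCache` and `fetch_token` are hypothetical names, the 300-second TTL is arbitrary, and the class is not thread-safe.

```python
import time

class TTLCache:
    """Tiny in-memory cache whose entries expire after ttl_seconds."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries = {}  # key -> (expires_at, value)

    def get_or_load(self, key, loader):
        """Return the cached value, calling loader() only if missing or expired."""
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]
        value = loader()
        self._entries[key] = (now + self._ttl, value)
        return value

    def invalidate(self, key):
        """Drop an entry early, for example after the server rejects a token."""
        self._entries.pop(key, None)

calls = 0

def fetch_token():
    """Hypothetical stand-in for a network call that returns an auth token."""
    global calls
    calls += 1
    return f"token-{calls}"

token_cache = TTLCache(ttl_seconds=300)
first = token_cache.get_or_load("auth", fetch_token)
second = token_cache.get_or_load("auth", fetch_token)  # served from the cache
print(first, second, calls)
```

Using a monotonic clock keeps expiry correct even if the system time is adjusted while the application runs.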

Consider a scenario where you have a 250ms API call. With caching, you can reduce that to a <1ms cache lookup. That’s a significant performance gain.

In summary, strategic caching can dramatically improve your application’s performance. Just be mindful of when and how you use it.

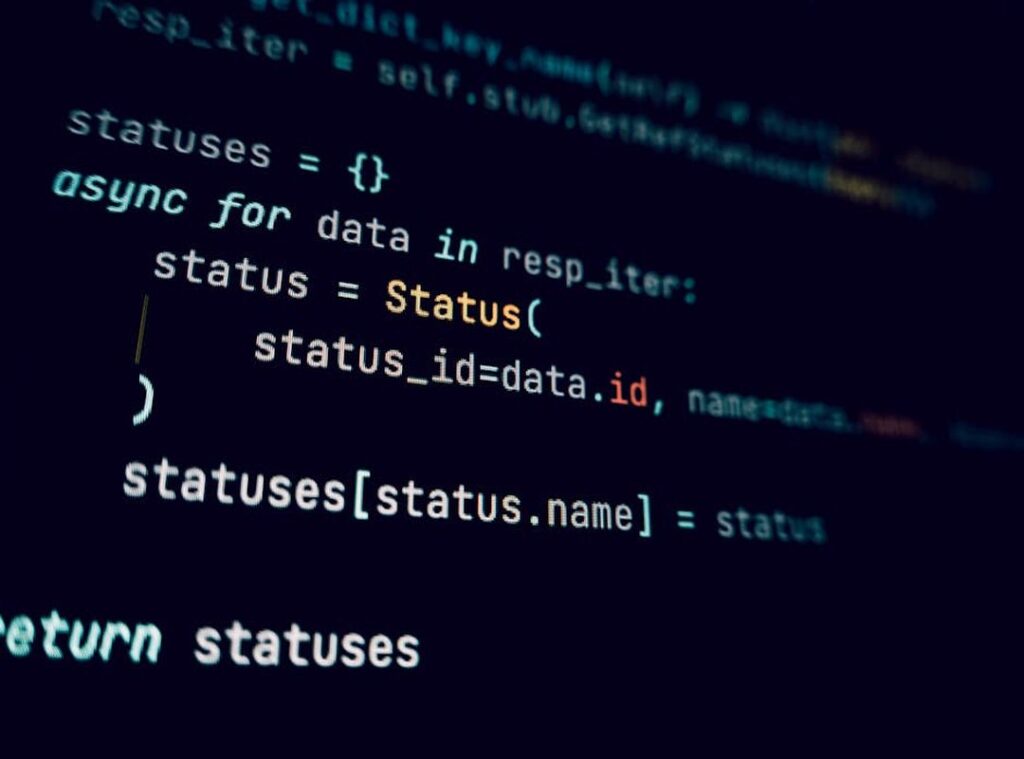

Mastering Asynchronous Operations for a Non-Blocking Architecture

Let’s get to the point. asyncio is Python’s standard-library framework for writing concurrent code with coroutines, which are essentially functions that can be paused and resumed. This means your application can handle other tasks while waiting for slow I/O operations to complete, directly combating lag.

Here’s a practical example. Imagine you have a standard synchronous SDK function call:

    import time

    def sync_sdk_call():
        # Simulated blocking I/O operation
        time.sleep(2)
        return "Data from SDK"

You can convert it to an asynchronous one like this:

    import asyncio

    async def async_sdk_call():
        await asyncio.sleep(2)  # Simulated I/O operation
        return "Data from SDK"

If your code is waiting for a network, a database, or a disk, it should be awaiting an asynchronous call.
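When a call you depend on only exists in blocking form, one option is to push it onto a worker thread with `asyncio.to_thread` (Python 3.9+), so the event loop stays free. A sketch, where `blocking_sdk_call` is a hypothetical stand-in rather than a real SDK method:

```python
import asyncio
import time

def blocking_sdk_call(item_id):
    """Hypothetical blocking call standing in for a synchronous SDK method."""
    time.sleep(0.1)  # simulated blocking I/O
    return f"result-{item_id}"

async def fetch_all(item_ids):
    # Each blocking call runs in a worker thread, so the event loop is not blocked.
    tasks = [asyncio.to_thread(blocking_sdk_call, i) for i in item_ids]
    return await asyncio.gather(*tasks)

results = asyncio.run(fetch_all([1, 2, 3]))
print(results)  # ['result-1', 'result-2', 'result-3']
```

This helps with I/O-bound calls; CPU-bound work in threads is still limited by the interpreter lock in standard CPython builds.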

For making asynchronous network requests, use a companion library like aiohttp. Blocking HTTP calls are often the root cause of latency when interacting with external APIs.

To manage and run multiple SDK operations concurrently, use asyncio.gather. This dramatically reduces the total execution time for batch processes.

    async def main():
        tasks = [async_sdk_call() for _ in range(5)]
        results = await asyncio.gather(*tasks)
        print(results)

    # Run the event loop
    asyncio.run(main())
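To see the difference for yourself, you can time the same batch run one call at a time and then with `asyncio.gather`. A self-contained sketch with a shortened simulated delay; `fake_sdk_call` is a placeholder, not an SDK function:

```python
import asyncio
import time

async def fake_sdk_call(delay=0.05):
    """Placeholder coroutine that simulates an I/O wait."""
    await asyncio.sleep(delay)
    return "Data from SDK"

async def run_sequentially(n):
    # Each call finishes before the next one starts.
    return [await fake_sdk_call() for _ in range(n)]

async def run_concurrently(n):
    # All calls wait at the same time.
    return await asyncio.gather(*(fake_sdk_call() for _ in range(n)))

async def compare(n=5):
    start = time.perf_counter()
    await run_sequentially(n)
    sequential = time.perf_counter() - start

    start = time.perf_counter()
    await run_concurrently(n)
    concurrent = time.perf_counter() - start
    return sequential, concurrent

sequential, concurrent = asyncio.run(compare())
print(f"sequential: {sequential:.2f}s, concurrent: {concurrent:.2f}s")
```

With five calls, the sequential run takes roughly five times the single-call delay, while the concurrent run takes roughly one.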

Pro tip: If you’re dealing with a lot of I/O-bound tasks, asyncio is your best friend. It helps you avoid blocking and keeps your application responsive.

Remember, the key is to identify where the bottlenecks are. If you see your app waiting on I/O, switch to awaiting asynchronous calls. It’s that simple.

Applying these asynchronous patterns to your SDK 25.5a calls can make a huge difference. Give it a try and see how much smoother your application runs.

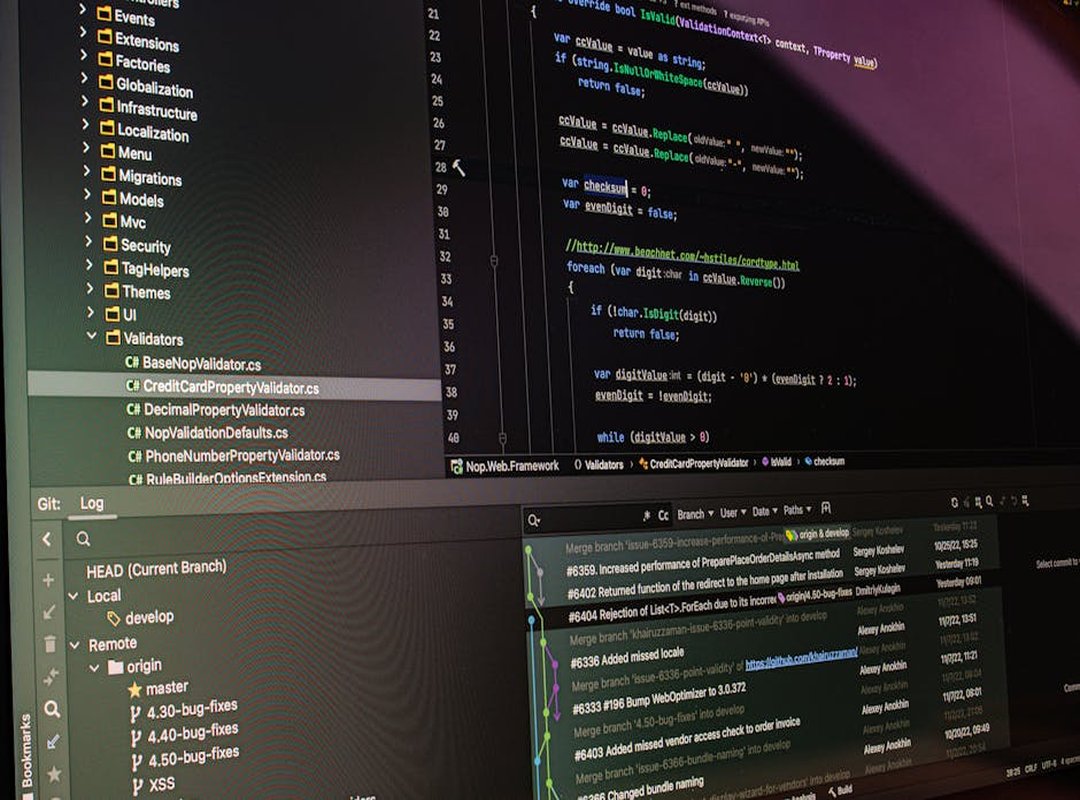

Profiling and Measurement: Stop Guessing, Start Knowing

If you’re serious about optimizing your Python code, start with the cProfile module. It’s your first step to get a high-level overview of which functions are consuming the most time.

| Column | Description |
|---|---|
| tottime | Total time spent in the function (excluding sub-functions) |
| ncalls | Number of calls made to the function |

These columns help you pinpoint the most impactful bottlenecks. Focus on functions with high tottime and ncalls.
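Here is a minimal sketch of producing that report with `cProfile` and `pstats`, sorted by `tottime`. The `slow_sum` and `workload` functions are synthetic stand-ins for your own code:

```python
import cProfile
import io
import pstats

def slow_sum(n):
    """Synthetic hot function: a plain Python loop."""
    total = 0
    for i in range(n):
        total += i * i
    return total

def workload():
    return [slow_sum(20_000) for _ in range(20)]

profiler = cProfile.Profile()
profiler.runcall(workload)  # profile a single call to workload()

# Write the report to a string, sorted by tottime, showing the top 5 entries.
buffer = io.StringIO()
stats = pstats.Stats(profiler, stream=buffer)
stats.sort_stats("tottime").print_stats(5)
report = buffer.getvalue()
print(report)
```

For a whole script, `python -m cProfile -s tottime your_script.py` gives the same kind of table without changing any code.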

Once you’ve identified the problematic functions, use line_profiler for a more granular, line-by-line performance breakdown. This tool is especially useful for understanding where exactly the time is being spent.

Don’t optimize what you haven’t measured. This principle is crucial. It prevents you from wasting time on micro-optimizations that have no real-world impact.

For example, if you’re chasing lag in an SDK 25.5a project, you might think a specific function is the bottleneck. But without profiling, you’re just guessing. Use cProfile and line_profiler to get the data you need.

From Lagging to Leading: Your Optimized SDK 25.5a Blueprint

Lag in Python SDK 25.5a is not a fixed constraint but a solvable problem, often caused by synchronous operations and unmeasured code.

This guide covers three key strategies to address this issue:

- Profile first to identify bottlenecks.

- Implement caching for quick wins.

- Adopt asyncio for maximum I/O throughput.

These techniques empower the developer to take direct control over their application’s responsiveness and user experience.

Challenge yourself to pick one slow, I/O-bound function in your current project and apply one of the methods from this guide today.

Drevian Tornhaven is the kind of writer who genuinely cannot publish something without checking it twice. Maybe three times. They came to style tips and advice through years of hands-on work rather than theory, which means the things they write about (Style Tips and Advice, Fashion Trends and Updates, Sustainable Fashion Insights, among other areas) are things they have actually tested, questioned, and revised opinions on more than once.

That shows in the work. Drevian's pieces tend to go a level deeper than most. Not in a way that becomes unreadable, but in a way that makes you realize you'd been missing something important. They have a habit of finding the detail that everybody else glosses over and making it the center of the story, which sounds simple but takes a rare combination of curiosity and patience to pull off consistently. The writing never feels rushed. It feels like someone who sat with the subject long enough to actually understand it.

Outside of specific topics, what Drevian cares about most is whether the reader walks away with something useful. Not impressed. Not entertained. Useful. That's a harder bar to clear than it sounds, and they clear it more often than not, which is why readers tend to remember Drevian's articles long after they've forgotten the headline.
